The other day one of our NetScaler appliances was unable to boot up after a power down. It was getting stuck during the FreeBSD bootup phase (before the NetScaler software actually loads) with the error:

Fatal trap 9: general protection fault while in kernel mode

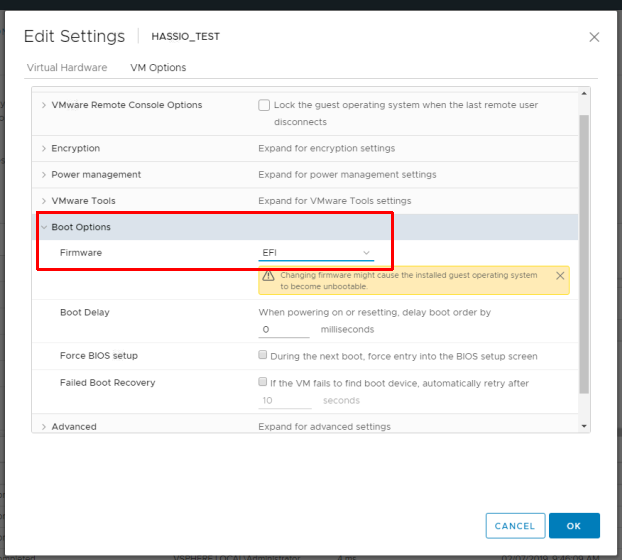

The only information I could find on this specific issue was here: https://support.citrix.com/article/CTX238252, but this was not relevant to us. I could not find anything else online talking about receiving this error on a NetScaler appliance. Restoring to previous snapshots of the appliance didn’t resolve the issue. After some digging I found that this VM was set to the highest VM compatibility level. At some point someone had set the comparability level of the VM to be upgraded to version 15, but this didn’t take effect until the VM was actually powered down (it had been rebooted many times since without issues).

To remediate this issue I did the following:

- Removed the VM from inventory

- Manually edited the vmx file ‘virtualHW.version‘ line to say virtualHW.version = “4”. I chose a lower version, so that I could use the GUI to upgrade the version later. This can be done using WinSCP or something similar to download/edit the file

- Added VM back to inventory

- Upgraded VM compatibility to version 7 in vCenter to let the system actually run through the VMX and check settings

After doing all of the above I was able to successfully boot up the NetScaler appliance. The main takeaway here is that the ‘fatal trap’ error was directly related to the VM compatibility setting in ESXi in this particular case.